Certification Examinations - General Information

You may also be interested in the How To Apply For A Certification Examination or the Examination Policies page.

About the National Registry Certification Examinations

The National Registry’s examinations are administered in two formats: Computerized Adaptive Testing (CAT) and Linear Computer-Based Testing (CBT). The National Registry uses CAT for Emergency Medical Responder (EMR), Emergency Medical Technician (EMT), and Paramedic (NRP) examinations. Advanced EMT (AEMT) examinations are administered in a linear CBT format.

A passing standard, identical for all candidates at their level of certification, is used to determine whether a candidate is successful or unsuccessful on the examination.

The National Registry uses the same process to develop all test items. First, external subject matter experts draft an item, also known as a test question. Next, the item goes through an extensive review process to ensure:

- Correct responses are correct.

- The item is accurate.

- The question is current.

- The content is clinically relevant.

- Incorrect responses are not partially correct.

This review process includes internal and external subject matter expert review, referencing, and editing.

Items are then pilot tested during live examinations. The National Registry collects enough data from candidate responses to pilot items to estimate each item’s level of difficulty and evaluate the item for evidence of bias. The National Registry does not count responses to piloted items towards the candidate’s score. Piloted items that do not meet the National Registry’s strict standards for calibration are revised and re-piloted or discarded. The National Registry places only items that meet rigorous standards as scored questions on the examination.

The difficulty statistic of an item identifies the “ability” necessary to answer an item correctly. The level of ability required to respond to an item correctly may be low, moderate, or high, depending on the estimated difficulty of the test question.
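The relationship between item difficulty and candidate ability can be illustrated with a short sketch. The page does not name the measurement model used, so the one-parameter logistic (Rasch) model below is an assumption chosen for illustration, and the function name and values are hypothetical.

```python
import math


def probability_correct(ability: float, difficulty: float) -> float:
    """Probability of a correct response under the Rasch model (an assumed model).

    Ability and difficulty sit on the same scale, so only their difference matters.
    """
    return 1.0 / (1.0 + math.exp(-(ability - difficulty)))


# One hypothetical candidate (ability 0.0) facing an easy, a matched, and a hard item.
for difficulty in (-1.0, 0.0, 1.0):
    p = probability_correct(ability=0.0, difficulty=difficulty)
    print(f"difficulty {difficulty:+.1f}: P(correct) = {p:.2f}")
```

Under this assumed model, a candidate whose ability equals the item difficulty has an even chance of answering correctly, and the chance falls as the item becomes harder.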

About the Minimum Passing Standard

The minimum passing standard is the level of knowledge or ability that a competent EMS provider must demonstrate to practice safely. The National Registry Board of Directors sets the passing standard following the development of new examination versions aligned to new practice analysis studies and subsequent revisions to examination specifications. A recommendation from a panel of experts and providers from the EMS community informs the Board’s actions. Psychometricians, experts in testing, facilitate the panels. The panel uses various recognized methods (such as the Angoff method) to assess how a minimally competent provider would respond to examination items. Panel assessments are combined to form a recommendation on the minimum passing standard for the exam. The Board considers this recommendation and the impact on the community to set the minimum passing standard.
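As a rough illustration of how panel assessments might be combined, the sketch below follows the basic Angoff idea: each panelist estimates the probability that a minimally competent provider answers each item correctly, and the estimates are summed per panelist and averaged across the panel. The ratings are invented for illustration, the function name is hypothetical, and the result is only a panel recommendation; as described above, the Board sets the actual standard.

```python
from statistics import mean


def angoff_cut_score(ratings: list[list[float]]) -> float:
    """Return the panel's recommended raw cut score.

    ratings[p][i] is panelist p's estimate of the probability that a
    minimally competent provider answers item i correctly.
    """
    expected_score_per_panelist = [sum(panelist) for panelist in ratings]
    return mean(expected_score_per_panelist)


# Hypothetical ratings: three panelists, four items.
ratings = [
    [0.6, 0.7, 0.5, 0.8],
    [0.5, 0.8, 0.6, 0.7],
    [0.7, 0.6, 0.5, 0.9],
]
cut = angoff_cut_score(ratings)
print(f"Recommended cut score: {cut:.2f} of {len(ratings[0])} items")
```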

Pilot Questions

During National Registry exams, every candidate receives pilot questions that are indistinguishable from scored items. Examinations do not factor pilot questions into a candidate’s performance. The number of pilot items included on each exam is detailed below:

- EMR: 30 items

- EMT: 10 items

- AEMT: 35 items

- Paramedic: 20 items

Computerized Adaptive Testing (CAT)

CAT examinations are delivered in a different manner than fixed-length examinations such as computer-based linear tests and paper-and-pencil tests. Candidates should not be concerned about the difficulty of an item on a CAT examination. The examination is scored differently than a fixed-length examination. All items are placed on a standard scale to identify where the candidate falls within the scale. As a result, candidates should answer all items to the best of their ability. Let’s use an example to explain this:

A candidate is trying out to be a high jumper on the track team. To make the team, the candidate must be able to jump over a bar that is four feet above the ground, and the coach must be 95% certain that the candidate can consistently jump four feet or higher.

At tryouts, the bar is first set to three feet six inches. If the candidate successfully jumps over the bar, the next bar will be set to three feet nine inches. The bar will continually be raised for subsequent jumps until the candidate can no longer jump over the bar. Once the candidate fails to jump over the bar, say, at four feet six inches, the bar will be lowered to four feet three inches for the next jump.

The purpose of raising the bar after successful jumps and lowering it after failures is to precisely determine the maximum height the candidate can jump. Once the coach is 95% certain of the candidate’s ability to jump four feet or higher, the tryout ends, and a pass/fail decision is made. If the candidate fails to jump four feet or higher, and the coach is 95% certain that the candidate cannot consistently make this jump, the candidate fails and does not make the team. If the candidate consistently jumps four feet or higher, and the coach is 95% certain that the candidate can consistently jump at least four feet, the candidate passes and makes the team.

The coach finds that seven of ten athletes clear the bar at the four-foot level. The coach will then raise the bar to 4 feet 3 inches and later to 4 feet 6 inches, increasing the height of the bar until the coach determines the maximum individual ability of each athlete. This process informs the coach about the athletes’ ability based on a standard scale (feet and inches). The coach then sets a standard (4 feet) for membership on the team, based upon his knowledge of what is necessary to score points at track meets (the competency standard).

How a CAT Examination Works

The high jump analogy describes the way a CAT examination works.

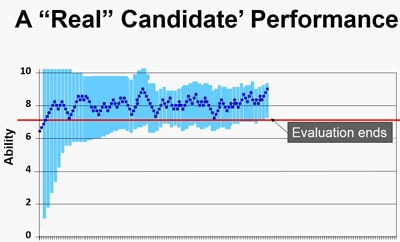

The test begins with an item that is slightly below the passing standard. The item may be from any subject area in the test plan. After the candidate answers a short series of items, the computer can determine the level of item difficulty best matched to the candidate’s ability. If the candidate answers an item incorrectly, the computer administers an easier item. If the candidate answers an item correctly, the computer administers a slightly more challenging item. These items are selected from across the content areas. Thus, the computer evaluates the candidate’s ability level in real time. Once the candidate has responded to the minimum number of items, the examination ends when there is enough confidence that the candidate is above or below the passing standard.
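The adaptive loop described above can be sketched in a few lines. This is a simplified illustration, not the National Registry’s algorithm: the item bank, the simulated candidate, and the shrinking step-size update are all assumptions (operational CAT systems typically use statistical ability estimation rather than fixed steps), and every name is hypothetical.

```python
from typing import Callable


def next_item(bank: list[float], used: set[int], ability: float) -> int:
    """Pick the unused item whose difficulty is closest to the current estimate."""
    candidates = [i for i in range(len(bank)) if i not in used]
    return min(candidates, key=lambda i: abs(bank[i] - ability))


def run_cat(
    bank: list[float],
    answers_correctly: Callable[[float], bool],
    start: float,
    n_items: int,
) -> tuple[float, list[tuple[float, bool]]]:
    """Administer n_items adaptively; return the final estimate and the history."""
    ability = start
    step = 1.0  # assumed step size; halves after each item, like finer bar heights
    used: set[int] = set()
    history: list[tuple[float, bool]] = []
    for _ in range(n_items):
        index = next_item(bank, used, ability)
        used.add(index)
        correct = answers_correctly(bank[index])
        history.append((bank[index], correct))
        # Raise the estimate after a correct answer, lower it after an incorrect one.
        ability += step if correct else -step
        step = max(step / 2, 0.1)
    return ability, history


# Hypothetical bank of item difficulties; the passing standard is taken to be 0.0.
bank = [-2.0, -1.5, -1.0, -0.5, 0.0, 0.5, 1.0, 1.5, 2.0]
# Simulated candidate who answers correctly whenever difficulty is at most 0.8.
final_ability, history = run_cat(bank, lambda d: d <= 0.8, start=-0.25, n_items=6)
for difficulty, correct in history:
    print(f"item difficulty {difficulty:+.1f}: {'correct' if correct else 'incorrect'}")
print(f"final ability estimate: {final_ability:.3f}")
```

The first item sits slightly below the assumed passing standard, and later items move up after correct answers and down after incorrect ones, mirroring the raised and lowered bar in the high jump analogy.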

A 95% Confidence is Necessary to Pass or Fail a CAT Exam

The computer stops the exam at the minimum number of items in the following situations:

- There is 95% confidence the candidate is at or above the passing standard.

- There is 95% confidence the candidate is below the passing standard.

- The candidate has reached the maximum allotted time.

The length of a CAT examination is variable. A candidate can demonstrate a level of competency at the minimum number of items. Candidates closer to entry-level competency need to provide the computer with more data to determine with 95% confidence that they are above or below the passing standard. The examination continues to administer items in these cases. Each item provides more information to determine if a candidate meets the passing standard. Test items will vary over the content domains regardless of the length of the examination.

The ability estimate for a candidate is most precise at the maximum length of the examination. The computer needs this level of precision for candidates with abilities close to the passing standard. As in the high jump analogy, the computer will be able to distinguish those who jump 3 feet 11 inches from those who jump 4 feet 1 inch.
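A minimal sketch of a 95% confidence stopping check follows. It assumes the ability estimate comes with a standard error and that the check uses a two-sided normal interval (z = 1.96); the page does not specify the statistical details, so these choices, the function name, and the minimum length of 70 items are illustrative assumptions. The time limit and maximum length are not modeled.

```python
from enum import Enum


class Decision(Enum):
    PASS = "pass"
    FAIL = "fail"
    CONTINUE = "continue"


def stopping_decision(
    ability: float,
    std_error: float,
    passing_standard: float,
    items_given: int,
    min_items: int,
    z: float = 1.96,  # assumed two-sided 95% normal interval
) -> Decision:
    """Decide whether the exam can stop with 95% confidence."""
    if items_given < min_items:
        return Decision.CONTINUE
    lower = ability - z * std_error
    upper = ability + z * std_error
    if lower >= passing_standard:
        return Decision.PASS  # whole interval is at or above the standard
    if upper < passing_standard:
        return Decision.FAIL  # whole interval is below the standard
    return Decision.CONTINUE  # interval straddles the standard: more items needed


# Hypothetical candidates, with the passing standard placed at 0.0.
print(stopping_decision(1.0, 0.3, 0.0, items_given=70, min_items=70))
print(stopping_decision(0.1, 0.3, 0.0, items_given=90, min_items=70))
print(stopping_decision(-1.0, 0.3, 0.0, items_given=70, min_items=70))
```

A candidate near the standard keeps receiving items because each additional response shrinks the standard error, which is why examinations for borderline candidates run longer.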

Some candidates will not be able to jump close to four feet. These candidates are below or well below the entry level of competency. Once the minimum number of items has been administered, the examination can confidently determine that these candidates are below the passing standard, and their examinations will end.

A candidate taking a CAT examination needs to answer every question to the best of their ability. The CAT exam provides precision, efficiency, and confidence that a successful candidate meets the definition of entry-level competency and can be a Nationally Certified EMS provider.

Linear Examinations

The AEMT examination is administered as a fixed-length linear computer-based test (CBT). The same number of items is administered to all candidates, although the items are not identical. Candidates select their answer and can change it prior to advancing to the next item. Candidates must provide an answer to each item. After the answer is submitted, candidates are unable to return to the item to modify their answer. Therefore, candidates are encouraged to answer each item to the best of their ability before submitting their answer.

What does the National Registry include in the examination?

The National Registry develops examinations that measure the essential aspects of out-of-hospital practice. To facilitate this, the National Registry performs a practice analysis to identify the tasks used in clinical care and the knowledge, skills, and abilities needed to perform those tasks. The practice analysis involves evaluating millions of EMS runs generated by thousands of EMS agencies and the input of over 3,500 subject matter experts. Next, psychometricians use this information to develop the test plan, which includes identifying content domains. Finally, subject matter experts create test questions based on the test plan. This process links examination content to EMS practice and establishes content validity. The National EMS Scope of Practice Model, the National EMS Education Standards, and the National Registry Practice Analysis guide content development.

EMS education programs are encouraged to review the current National Registry practice analysis when teaching courses and as a part of the final review of students’ abilities to correctly deliver the tasks necessary for competent patient practice.

The content areas covered in the National Registry examinations at the EMR, EMT, AEMT, and Paramedic levels are shown below.

| Content Area | EMR (90-110 items) | EMT (70-120 items) | Advanced EMT (135 items) | Paramedic (110-150 items) |
| --- | --- | --- | --- | --- |
| Airway, Respiration & Ventilation | 18%-22% | 18%-22% | 9%-13% | 8%-12% |
| Cardiology & Resuscitation | 20%-24% | 20%-24% | 11%-15% | 10%-14% |
| Trauma | 15%-19% | 14%-18% | 7%-11% | 6%-10% |
| Medical/Obstetrics/Gyn | 27%-31% | 27%-31% | 25%-29% | 24%-28% |
| EMS Operations | 11%-15% | 10%-14% | 6%-10% | 8%-12% |
| Clinical Judgment | | | 31%-35% | 34%-38% |

At each level, examination questions related to pediatric patient care are integrated throughout all content areas except EMS Operations.
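As a quick arithmetic check on the content-area ranges, the sketch below sums the lower and upper bounds for each level and confirms that each set of ranges can total 100%. It assumes the two Clinical Judgment percentages belong to the Advanced EMT and Paramedic columns, which is the only placement under which those two levels can reach 100%.

```python
# (low %, high %) per content area, in the order listed in the table.
# Assumption: Clinical Judgment applies only to Advanced EMT and Paramedic.
BLUEPRINT = {
    "EMR": [(18, 22), (20, 24), (15, 19), (27, 31), (11, 15)],
    "EMT": [(18, 22), (20, 24), (14, 18), (27, 31), (10, 14)],
    "Advanced EMT": [(9, 13), (11, 15), (7, 11), (25, 29), (6, 10), (31, 35)],
    "Paramedic": [(8, 12), (10, 14), (6, 10), (24, 28), (8, 12), (34, 38)],
}


def range_totals(ranges: list[tuple[int, int]]) -> tuple[int, int]:
    """Return the sum of the lower bounds and the sum of the upper bounds."""
    return sum(low for low, _ in ranges), sum(high for _, high in ranges)


for level, ranges in BLUEPRINT.items():
    low, high = range_totals(ranges)
    print(f"{level}: {low}%-{high}% (can total 100%: {low <= 100 <= high})")
```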

National EMS Practice Analysis

The goal of licensure and certification is to assure the public that individuals who work in a particular profession have met specific standards and are qualified to engage in EMS care (American Educational Research Association, American Psychological Association, and National Council on Measurement in Education, 1999). The National Registry bases certification and licensure requirements on a candidate’s ability to practice safely and effectively to meet the goal of measuring competency accurately, reliably, and with validity (Kane, 1982). Therefore, the National Registry examination development uses a practice analysis as a critical component in the legally defensible and psychometrically sound credentialing process.

The primary purpose of a practice analysis is to develop a clear and accurate picture of the current practice of a job or profession, in this case, the provision of emergency medical care in the out-of-hospital environment. The results of the practice analysis are used throughout the entire National Registry examination development process to ensure a connection between the examination content and EMS practice. The practice analysis helps answer the questions, “What are the most important aspects of practice?” and “What constitutes safe and effective care?” It also enables the National Registry to develop examinations that reflect the contemporary, real-life practice of out-of-hospital emergency medicine.

The National Registry collects data about EMS practice that identifies tasks, knowledge, skills, and abilities. An analysis of the collected data provides evidence of the frequency and criticality of each identified task. The psychometrics team combines these measures into a weighted importance score for each of the five domains. The National Registry then uses the proportion represented by each area in the weighted importance score to set the blueprint (test plan) for the examinations.
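One way to picture the step from task data to a blueprint is sketched below. The page does not give the weighting formula, so multiplying frequency by criticality is an assumption, and the domain names and ratings are invented purely for illustration.

```python
def blueprint_proportions(
    task_ratings: dict[str, list[tuple[float, float]]],
) -> dict[str, float]:
    """Turn per-task (frequency, criticality) ratings into domain proportions.

    Assumed weighting: a task's importance is frequency multiplied by criticality.
    """
    domain_totals = {
        domain: sum(frequency * criticality for frequency, criticality in tasks)
        for domain, tasks in task_ratings.items()
    }
    grand_total = sum(domain_totals.values())
    return {domain: total / grand_total for domain, total in domain_totals.items()}


# Hypothetical ratings on a 1-5 scale; not real practice analysis data.
task_ratings = {
    "domain_a": [(4, 5), (2, 5)],  # importance 20 + 10 = 30
    "domain_b": [(3, 4), (2, 4)],  # importance 12 + 8 = 20
    "domain_c": [(5, 2)],          # importance 10
}
proportions = blueprint_proportions(task_ratings)
for domain, share in proportions.items():
    print(f"{domain}: {share:.1%} of the test plan")
```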

Example Items

Below are some of the types of questions entry-level providers can expect to answer on the exam:

- A 24-year-old patient fell while skateboarding and has a painful, swollen, deformed lower arm. An EMT is unable to palpate a radial pulse. What should the EMT do next?

  - Apply cold packs to the injury.

  - Align the arm with gentle traction.

  - Splint the arm in the position found.

  - Ask the patient to try moving their arm.

- An 86-year-old patient with terminal brain cancer is disoriented after a fall. The patient reports severe right hip pain. The spouse tells the EMT that the patient has DNR orders and does not want the patient transported. What should the EMT do next?

  - Explain the risks of refusal of transport.

  - Ask to see the patient’s DNR orders.

  - Have the patient sign a refusal form.

  - Request law enforcement intervention.

- Law enforcement officers have detained a patient who they believe is drunk. The officers called because the patient has a history of diabetes. An EMT administers oral glucose, and within a minute, the patient becomes unresponsive. What should an EMT do first?

  - Perform chest compressions.

  - Initiate rapid transport.

  - Begin positive pressure ventilations.

  - Suction the patient’s airway.

How are test questions (items) created?

The National Registry test development process is a complex integration of multiple teams, organizations, volunteers, and internal staff. The process combines data science, subject matter expertise, and various specialized and complex skills and competencies to create each question. A single test question takes approximately one year to create and costs roughly $1,800 to develop. The item development process is uniform across all National EMS Certifications.

Volunteers from the EMS community write test questions based on the test plan. Volunteers then submit the questions to the National Registry. The Examination team then performs several rounds of internal review where test questions are referenced and reviewed for clinical accuracy, grammar, and style. Next, a committee of external subject matter experts reviews each item for accuracy, correctness, relevance, and currency. Test questions are then reviewed again by internal staff for any final referencing needs or grammatical issues. The entire review process can take six months or longer from start to finish. The process ensures that:

- Every question is referenced to a task in the practice analysis.

- Each correct answer is correct, current, and accurate.

- Incorrect options are plausible and not partially correct.

- Commonly available EMS textbooks contain each answer.

Controversial questions are discarded or revised before piloting. The psychometrics team performs a reading analysis and evaluates each item for evidence of bias related to race, gender, or ethnicity.

All items are pilot tested. All candidates receive piloted items during their examinations. Piloted items are indistinguishable from scored items but do not count towards a candidate’s score. The psychometrics team, experts in testing, collects the response data and performs an item analysis after piloting. Psychometricians convert functioning and psychometrically sound items to scored test questions.

Once a test question passes piloting, the National Registry continuously monitors it for changes in performance. Any test question that drifts in performance is removed from the live examination, reviewed, revised, and repiloted.

The National Registry provides candidates who fail to meet entry-level competency with information about their test performance relative to the passing standard. Studying the tasks, knowledge, skills, and abilities required to practice provides the best preparation.

Pearson VUE: The National Registry Test Provider

- Pearson VUE Professional Centers (PPCs) are testing centers owned and operated by Pearson and located in most urban areas.

- Pearson VUE Testing Centers (PVTCs) have a contractual relationship with Pearson VUE. These centers are generally located in smaller towns and rural areas. PVTCs are used to increase access to EMS testing in rural areas.

More information about OnVUE examinations can be found here.

The National Registry policy for online proctored examinations can be found here.

In some cases, the closest Pearson VUE test center is located in another state. Candidates can test at any authorized Pearson VUE test center in the United States at a convenient date, time, and location. The examination delivery process is the same regardless of where it is taken.

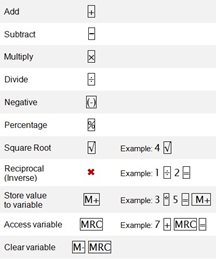

CERTIFICATION EXAMINATION ONSCREEN CALCULATOR

HELPFUL INFORMATION ABOUT THE EXAMINATION

All exam items evaluate the candidate’s ability to apply knowledge to perform the tasks required of entry-level EMS professionals. Questions answered incorrectly on the exam could mean choosing the wrong assessment or treatment in the field. There are some general concepts to remember about the examination:

Examination content reflects the National EMS Education Standards. The National Registry avoids specific details with regional differences, including local and state variances such as protocols. Some topics in EMS are controversial, and experts disagree on the single best approach to some situations. Therefore, the National Registry avoids testing controversial areas.

National Registry exams focus on what providers do in the field. Item writers do not lean on any single textbook or resource. National Registry examinations reflect accepted and current EMS practice. Fortunately, most textbooks are up-to-date and written to a similar standard, but no single source thoroughly prepares a candidate for the examination. Candidates are encouraged to consult multiple references, especially in areas in which they are having difficulty.

A candidate does not need to be an experienced computer user or have typing experience to take the computer-based exam. The National Registry designed the computer testing system for people with minimal computer experience and typing skills. A tutorial is available to each candidate before taking the examination.

An on-screen calculator is available on all National Registry examinations.

PREPARING FOR THE EXAMINATION

Here are a few simple suggestions that will help you to perform to the best of your ability on the examination:

- Study your textbook thoroughly and consider using the accompanying workbooks to help you master the material.

- Thoroughly review the current American Heart Association’s Guidelines for Cardiopulmonary Resuscitation and Emergency Cardiovascular Care. You will be tested on this material at the level of the exam you are taking.

- The National Registry does not recommend a particular study guide but recognizes that they can be useful. Study guides may help you identify your weaknesses but should be used carefully. Some study guides contain many easy questions, which can lead candidates to believe that they are prepared for the exam when more study is warranted. If you choose to use a study guide, we suggest that you do so a few weeks before your actual exam. You can obtain study guides from your local bookstore or library. Use your score to identify your areas of strength and weakness. Re-read and study your notes and materials for the areas in which you did not do well.

- The National Registry does not provide candidates with information about their specific deficiencies.

The Night Before the Examination

- Do not wait until the night before the exam to begin studying. There will not be enough time to review if you encounter a topic you do not think you know well. This process will only create a stressful situation.

- Get a good night’s sleep.

The Day of the Examination

- Eat a well-balanced meal.

- Arrive at the test center at least 30 minutes before the scheduled testing time.

  - The identification and examination preparation process takes time.

  - A candidate may need this time to review the tutorial on taking a computer-based test.

  - Arriving early will reduce stress.

- Be sure to have the proper identification as outlined in the confirmation materials before heading to the test center.

  - A candidate will not be able to take the exam if they do not have the proper form of identification.

- Relax. Thorough preparation and confidence are the best ways to reduce test anxiety.

During the Examination

Take time to read each question carefully. The National Registry constructed its examinations to allow plenty of time to finish. Most successful candidates spend about 30 to 60 seconds per item reading each question carefully and thinking it through.

- Fewer than 1% of candidates are unable to finish the examination. Thus, the risk of misreading a question is far greater than your risk of running out of time.

- Do not get frustrated. Everyone will think the examination is difficult because of the adaptive nature of the CAT examination. The CAT algorithm adjusts the examination to a candidate’s maximum ability level, so a candidate may feel that all items are difficult. Instead, focus on one question at a time, do your best on that question, and move on.
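The pacing guidance above translates into a rough time estimate. The sketch below multiplies an examination’s item-count range by the 30 to 60 seconds per item that most successful candidates reportedly spend; the item counts come from the content table on this page, and the function name is hypothetical. It says nothing about the actual time limit, which this page does not state.

```python
def working_time_minutes(
    min_items: int,
    max_items: int,
    min_seconds: int = 30,
    max_seconds: int = 60,
) -> tuple[float, float]:
    """Return the shortest and longest likely working time in minutes."""
    return min_items * min_seconds / 60, max_items * max_seconds / 60


# Item-count ranges as listed in the content table.
for level, (low, high) in {"EMT": (70, 120), "Advanced EMT": (135, 135)}.items():
    shortest, longest = working_time_minutes(low, high)
    print(f"{level}: roughly {shortest:.0f} to {longest:.0f} minutes")
```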

After the Examination

- Examination results are not released at the test center or over the telephone.

- Examination results will be posted to a candidate’s National Registry account within three business days following the completion of the examination, provided the candidate has met all other registration requirements.

- Candidates should log into their account and click on “Dashboard” or “My Application > Application Status” to view examination results.